May 7, 2026

How to Fix Webflow Crawl Errors and Improve Indexing


Transform your website with expert Webflow development

From brand identity to Webflow development and marketing, we handle it all. Trusted by 50+ global startups and teams.

Search engine visibility is critical for any business that relies on organic traffic. Yet many companies using Webflow discover their websites aren't reaching their full potential in search results. One of the most overlooked causes of poor search performance is unaddressed crawl errors. When search engine bots encounter problems accessing your pages, they can't properly index your content, resulting in missed opportunities for visibility and traffic.

Webflow, as a powerful web design and hosting platform, provides excellent tools for building responsive websites. However, like any platform, it requires proper configuration to ensure search engines can crawl and index your content effectively. Whether you're managing your own Webflow website or working with a Webflow development agency, understanding how to identify and fix crawl errors is essential for achieving strong search rankings.

This comprehensive guide walks you through the most common Webflow crawl errors, explains why they occur, and provides actionable solutions to improve your site's indexability and overall search engine optimization performance.

Understanding Webflow Crawl Errors

Before diving into fixes, it's important to understand what crawl errors are and why they matter. Crawl errors occur when search engine bots attempt to access pages on your website but encounter obstacles that prevent them from reading the content. These obstacles can range from broken links and server errors to misconfigured robots.txt files or excessive redirects.

The impact of crawl errors extends beyond just indexing. When search engines struggle to crawl your site efficiently, they allocate less of their crawl budget to discovering new content and fresh updates. This means your valuable pages might not be indexed at all, or updates might take significantly longer to be recognized in search results.

Webflow sites are particularly susceptible to certain types of crawl errors because of how the platform handles hosting, SSL certificates, and dynamic content. Understanding the platform-specific issues helps you address them more effectively and improve your site's search performance.

Common Types of Webflow Crawl Errors

Server Errors and HTTP Status Codes

The most frequently encountered crawl errors on Webflow sites involve server response codes in the 5xx range. These indicate that the server encountered an error while processing the request. Common 5xx errors include 500 (Internal Server Error), 502 (Bad Gateway), and 503 (Service Unavailable).

When search bots receive these responses, they mark the pages as inaccessible. If this happens repeatedly, search engines will crawl those pages less frequently, reducing their chances of being indexed or re-indexed with fresh content.

To diagnose server errors, open Google Search Console and go to the Page indexing report (Indexing > Pages), which replaced the older Coverage report. Affected URLs are listed under the reason "Server error (5xx)", along with the date Google last crawled each one. Additionally, use tools like Screaming Frog or SEMrush to crawl your site independently and identify problematic pages.
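As a rough illustration of that independent check, the sketch below sorts HTTP status codes into crawl-health buckets and picks out the URLs that returned 5xx responses. The function names and the sample paths are hypothetical, and `fetch_status` is only one simple way to request a page with Python's standard library.

```python
from urllib.error import HTTPError
from urllib.request import Request, urlopen


def classify_status(code: int) -> str:
    """Map an HTTP status code to a rough crawl-health bucket."""
    if 200 <= code < 300:
        return "ok"
    if 300 <= code < 400:
        return "redirect"
    if 400 <= code < 500:
        return "client_error"
    if 500 <= code < 600:
        return "server_error"
    return "unknown"


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status for a URL.

    urlopen follows redirects, so this reports the status of the final page.
    Network failures such as DNS errors are not handled here and will raise.
    """
    request = Request(url, method="HEAD", headers={"User-Agent": "crawl-check-sketch"})
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status
    except HTTPError as error:
        return error.code  # 4xx and 5xx responses arrive as exceptions


def server_errors(statuses: dict[str, int]) -> list[str]:
    """List the URLs whose recorded status is in the 5xx range."""
    return sorted(url for url, code in statuses.items()
                  if classify_status(code) == "server_error")


# Made-up sample data standing in for a real crawl.
sample = {"/": 200, "/pricing": 503, "/blog/old-post": 404, "/contact": 500}
print(server_errors(sample))
```

In practice you would fill the dictionary by calling `fetch_status` on each URL from your sitemap, then compare the result with what Search Console reports.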

Redirect Chains and Loops

Redirect chains occur when one URL redirects to another URL, which in turn redirects to a third URL, creating an inefficient path to the final destination. Redirect loops happen when URL A redirects to URL B, and URL B redirects back to URL A, creating an infinite loop that prevents access to either page.

Webflow development often involves restructuring site architecture and updating URL patterns. During these transitions, redirect chains frequently develop if not carefully managed. Each redirect consumes crawl budget and slows down the process of reaching the actual content, negatively impacting both user experience and search engine crawlability.

To fix redirect chains, review your redirect rules in Webflow's project settings and ensure you're redirecting directly to the final destination rather than creating intermediate redirects.
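To make the difference between a chain and a loop concrete, here is a minimal sketch that walks a redirect table and reports both. It assumes you have copied your redirect rules into a plain dictionary of source path to target path; the paths shown are invented for illustration.

```python
def trace_redirect(start: str, redirects: dict[str, str]) -> tuple[list[str], bool]:
    """Follow redirects from `start`; return the path taken and whether it loops."""
    path = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        path.append(current)
        if current in seen:
            return path, True  # we came back to a URL already visited
        seen.add(current)
    return path, False


def audit_redirects(redirects: dict[str, str]):
    """Split redirect sources into chains (more than one hop) and loops."""
    chains, loops = {}, {}
    for source in redirects:
        path, is_loop = trace_redirect(source, redirects)
        if is_loop:
            loops[source] = path
        elif len(path) > 2:  # source -> intermediate -> destination
            chains[source] = path
    return chains, loops


# Hypothetical redirect rules.
rules = {
    "/old": "/interim",
    "/interim": "/new",
    "/a": "/b",
    "/b": "/a",
    "/x": "/y",
}
chains, loops = audit_redirects(rules)
print(chains)
print(sorted(loops))
```

Here `/old` is a chain because it needs two hops to reach `/new`, while `/a` and `/b` form a loop. The single-hop rule `/x` to `/y` is left alone.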

SSL Certificate Issues

HTTPS has been a lightweight Google ranking signal since 2014, and all Webflow sites come with free SSL certificates by default. However, certain configurations can create SSL-related crawl errors. Mixed content errors occur when your site loads resources (images, scripts, stylesheets) over HTTP when the main page uses HTTPS.

Mixed content can interfere with rendering: browsers block insecure scripts and stylesheets on HTTPS pages, so a page that depends on them may render incompletely for visitors and for search engines that render pages the way a browser does. Webflow typically handles SSL setup automatically, but custom code or third-party integrations sometimes introduce mixed content issues.

Robots.txt Problems

Your robots.txt file instructs search engines which parts of your site they should and shouldn't crawl. Misconfigured robots.txt files can accidentally block entire sections of your site from being indexed, creating unnecessary crawl errors.

Common mistakes include blocking entire directories, using overly broad rules, or accidentally blocking CSS and JavaScript files. When these files can't be accessed, search engines have difficulty rendering and understanding your content, treating many pages as having crawl errors.

Diagnosing Crawl Errors in Google Search Console

Google Search Console is your primary tool for identifying crawl errors on your Webflow site. The platform provides detailed insights into how Google's bots interact with your website.

Start by opening the Page indexing report (Indexing > Pages), which replaced the older Coverage report. It splits your URLs into two groups, Indexed and Not indexed, and lists the reason each group of pages was not indexed, such as "Server error (5xx)", "Redirect error", "Blocked by robots.txt", or "Not found (404)". Focus on these reasons first, as they represent the most significant barriers to indexation.

The report shows each reason, the number of affected pages, and when the problem was first detected. Click a reason to see which URLs are affected. This information is crucial for prioritizing your fixes.

The URL Inspection tool allows you to check individual pages. It shows you exactly how Google's crawler sees your page, what it rendered, and whether it encountered any issues. Use this tool to verify that your fixes are working correctly after you implement them.

Fixing Webflow Crawl Errors

Resolving Server Errors

When you identify server errors in Google Search Console, first check Webflow's status page to confirm that the platform isn't experiencing widespread outages. If the issue is isolated to your site, review your project settings and recent changes.

Server errors often stem from misconfigured hosting settings, SSL certificate issues, or resource conflicts. Try clearing your browser cache and accessing your site from different locations to determine if the error is persistent. If the error persists, contact Webflow support with details from Google Search Console, as the issue may require platform-level intervention.

Because Webflow manages the servers, monitor your site from the outside. Set up uptime or synthetic monitoring with a service such as Sentry or New Relic so that a scheduled check alerts you when key pages start returning 5xx responses, ideally before search engines encounter them.

Cleaning Up Redirects

Audit all redirects on your Webflow site systematically. Export your sitemap and check each URL to identify any redirect chains. A tool like Screaming Frog can automatically detect redirect chains and loops, saving you significant time.

Once you've identified problem redirects, update them in Webflow's project settings to point directly to the final destination. Delete any temporary redirect rules that are no longer necessary. Each unnecessary redirect wastes crawl budget, so minimize the total number of redirects on your site.
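Pointing every redirect directly at its final destination can be sketched as a small transformation over the redirect table. This assumes the rules are available as a dictionary of source to target; the names and paths are hypothetical, and the output still has to be entered into Webflow's redirect settings by hand or by import.

```python
from typing import Optional


def final_destination(source: str, redirects: dict[str, str]) -> Optional[str]:
    """Return where `source` ultimately lands, or None if it is caught in a loop."""
    seen = {source}
    current = redirects[source]
    while current in redirects:
        if current in seen:
            return None  # loop: there is no final destination
        seen.add(current)
        current = redirects[current]
    return current


def flatten_redirects(redirects: dict[str, str]):
    """Rewrite each rule to a single hop and list the sources stuck in loops."""
    flattened, looping = {}, []
    for source in redirects:
        target = final_destination(source, redirects)
        if target is None:
            looping.append(source)
        else:
            flattened[source] = target
    return flattened, sorted(looping)


# Hypothetical rules left over from two rounds of URL restructuring.
rules = {
    "/services-old": "/services-2024",
    "/services-2024": "/services",
    "/a": "/b",
    "/b": "/a",
}
flat, stuck = flatten_redirects(rules)
print(flat)   # every remaining rule is a single hop
print(stuck)  # rules that need manual review
```

Rules reported as looping have no safe automatic fix, so the sketch only lists them for you to decide which URL should be the real destination.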

For permanent changes to your site structure, use 301 redirects rather than temporary 302 redirects. This ensures that link equity and ranking signals transfer to the new URLs, preserving your search visibility during the transition.

Fixing Mixed Content Issues

If you're experiencing mixed content warnings, audit your site for HTTP resources. Check your CSS files, JavaScript includes, and image sources to ensure they all use HTTPS URLs. In Webflow's designer, update any custom code that references external resources to use secure HTTPS connections.
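One way to run that audit is to scan a page's HTML for resources requested over plain HTTP. The sketch below uses Python's built-in HTML parser and only covers a handful of common tags, which is an assumption on our part; it does not look inside CSS files or inline scripts. Ordinary `<a>` links are skipped on purpose, since linking to an HTTP page is not mixed content.

```python
from html.parser import HTMLParser

# Tags whose `src` attribute loads a resource into the page.
SRC_TAGS = {"img", "script", "iframe", "source", "video", "audio"}


class MixedContentFinder(HTMLParser):
    """Collect (tag, url) pairs for resources loaded over http://."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "link":
            # Only stylesheet links load a resource; canonical links do not.
            rel = (attributes.get("rel") or "").lower().split()
            url = attributes.get("href") if "stylesheet" in rel else None
        elif tag in SRC_TAGS:
            url = attributes.get("src")
        else:
            url = None
        if url and url.lower().startswith("http://"):
            self.insecure.append((tag, url))


def find_insecure_resources(html: str) -> list[tuple[str, str]]:
    finder = MixedContentFinder()
    finder.feed(html)
    finder.close()
    return finder.insecure


# Made-up page fragment.
page = (
    '<link rel="stylesheet" href="http://cdn.example.com/a.css">'
    '<img src="https://example.com/a.png">'
    '<script src="http://example.com/x.js"></script>'
    '<a href="http://example.com/">partner site</a>'
)
print(find_insecure_resources(page))
```

Each reported URL is a candidate to switch to `https://` in your custom code or embed settings.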

If you've integrated third-party services, verify that they provide HTTPS endpoints. If a vendor only offers HTTP access, consider finding an alternative service that supports secure connections.

Correcting Robots.txt Configuration

Review your robots.txt file in Webflow's SEO settings. A well-optimized robots.txt should allow access to your entire site unless you have specific pages you want to exclude. Avoid blocking CSS, JavaScript, and image resources, as these are essential for proper page rendering.

Check whether you're accidentally disallowing important directories. For example, some configurations block the /collections/ directory in dynamic sites, preventing search engines from accessing product or blog post pages. Adjust your rules to allow search engine access to all content you want indexed.

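After making changes, open the robots.txt report in Google Search Console (under Settings), which replaced the retired robots.txt tester, to confirm that Google fetched the updated file without errors. Then use the URL Inspection tool on a few important pages to confirm they are not blocked. This ensures that your updates achieve the desired effect without unintended consequences.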
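Before publishing a robots.txt change, you can also check the rules locally. The sketch below uses Python's standard `urllib.robotparser` to see which URLs a set of rules would block. The rules and URLs are invented examples. Note that Python's parser applies the first matching rule and has no wildcard support, so results can differ from Googlebot's for files that rely on `*` patterns or on Allow rules that override a broader Disallow.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules containing the /collections/ mistake described above.
rules = """\
User-agent: *
Disallow: /collections/
Disallow: /search
"""

pages = [
    "https://example.com/collections/post-1",
    "https://example.com/blog/post",
    "https://example.com/search?q=webflow",
]
print(blocked_urls(rules, pages))
```

If a page you want indexed shows up in the output, the rule that matches it is too broad.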

Addressing XML Sitemap Issues

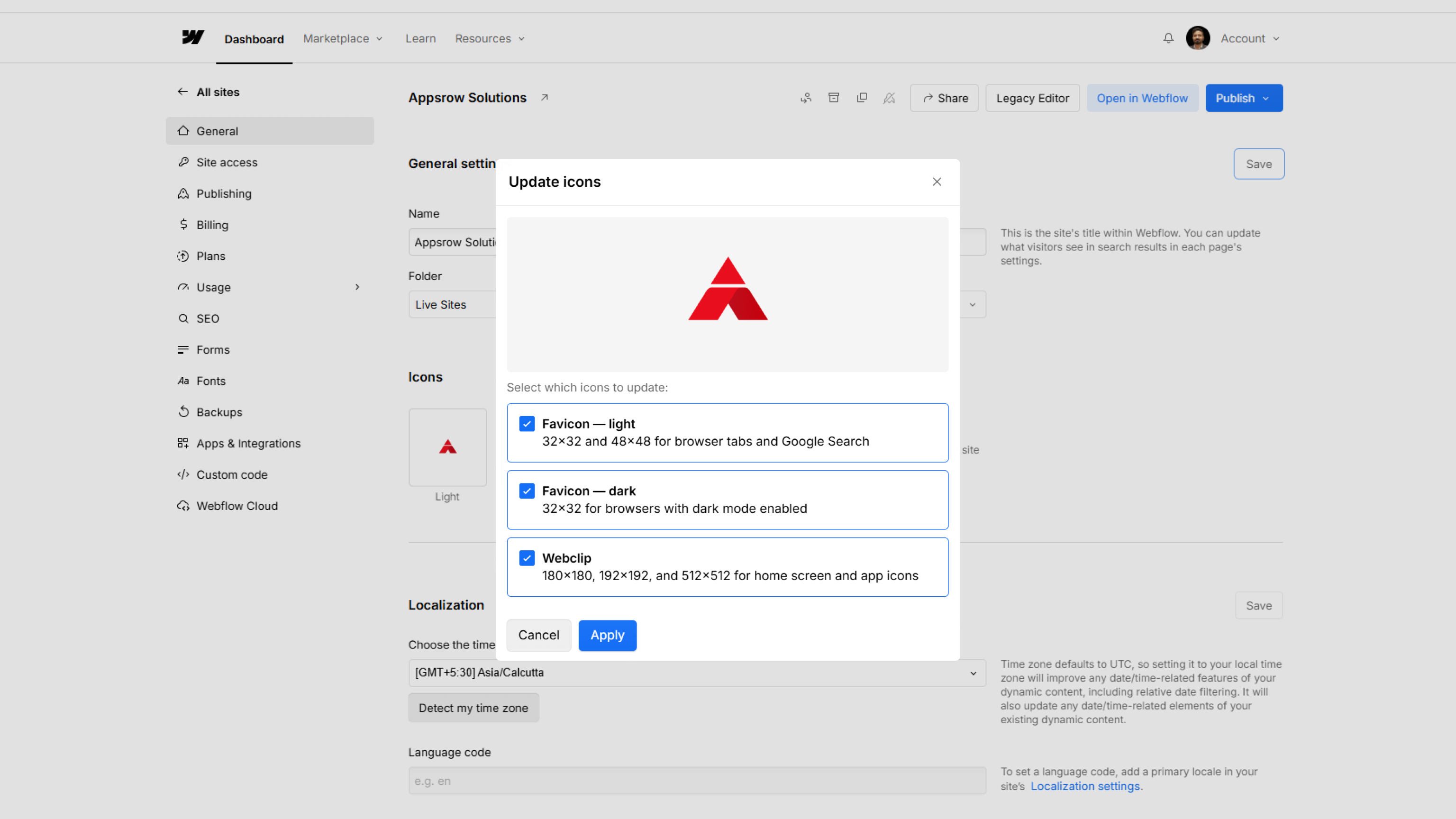

Your XML sitemap serves as a roadmap for search engines to discover all your pages. In Webflow, the platform automatically generates a sitemap, but you should verify it includes all important pages and contains no broken URLs.

Access your sitemap at yoursite.com/sitemap.xml and scan it for any 404 errors or redirect chains within the sitemap itself. Remove any outdated URLs and ensure all URLs in the sitemap are accessible and not blocked by robots.txt.
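A sitemap check like this can be sketched in a few lines: extract every `<loc>` from the XML, then flag any URL that does not return a 200. The example assumes a plain `urlset` sitemap rather than a sitemap index, and the status codes are made-up stand-ins for the results of your own crawl.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> list[str]:
    """Return every <loc> value from a urlset sitemap."""
    root = ET.fromstring(xml_text.strip())
    return [loc.text.strip()
            for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
            if loc.text]


def sitemap_problems(urls: list[str], statuses: dict[str, int]) -> dict:
    """Map each sitemap URL that did not return 200 to its status (None if unchecked)."""
    return {url: statuses.get(url) for url in urls if statuses.get(url) != 200}


# Invented sitemap and crawl results.
sample = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog/post-1</loc></url>
  <url><loc>https://example.com/old-page</loc></url>
</urlset>
"""
urls = sitemap_urls(sample)
crawl = {"https://example.com/": 200, "https://example.com/blog/post-1": 301}
print(sitemap_problems(urls, crawl))
```

A redirecting URL in the sitemap should be replaced by its destination, and a URL with no recorded status needs to be crawled before you can trust it.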

Submit your sitemap to Google Search Console, and monitor it regularly for issues. Webflow updates your sitemap automatically when you publish changes, so you don't need to manually recreate it with each update.

Optimizing Your Site's Crawlability

Improving Site Structure and Navigation

A clear, logical site structure helps search engines discover and understand your content. Organize your Webflow site with a consistent hierarchy where important pages are only a few clicks from the homepage. Use descriptive folder and collection names that reflect your content organization.
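"A few clicks from the homepage" can be measured. The sketch below runs a breadth-first search over an internal link graph to compute each page's click depth, then lists pages that are too deep or cannot be reached from the homepage at all. The link graph, the homepage path, and the depth limit of 3 are all assumptions chosen for illustration.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search: fewest clicks needed to reach each page from `home`."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def deep_or_orphaned(links: dict[str, list[str]], home: str = "/", max_depth: int = 3):
    """Return (pages deeper than max_depth, pages unreachable from home)."""
    depths = click_depths(links, home)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    too_deep = sorted(page for page, depth in depths.items() if depth > max_depth)
    orphaned = sorted(all_pages - set(depths))
    return too_deep, orphaned


# Hypothetical link graph: page -> pages it links to.
site = {
    "/": ["/blog", "/about"],
    "/blog": ["/blog/a"],
    "/blog/a": ["/blog/b"],
    "/blog/b": ["/blog/c"],
    "/hidden": ["/"],
}
print(deep_or_orphaned(site))
```

In this sample, `/blog/c` sits four clicks deep and `/hidden` has no inbound links, so both are candidates for better internal linking.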

Include breadcrumb navigation on your pages, both for user experience and SEO benefits. Breadcrumbs help search engines understand the relationship between pages and provide another path for bot discovery.

Enhancing Internal Linking

Internal links guide both users and search engine bots through your site. Each internal link provides another opportunity for search engines to discover pages and understand the structure of your content. Use descriptive anchor text that accurately describes the linked page rather than generic phrases like "click here."
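Generic anchor text is easy to find automatically. The following sketch collects the links in a piece of HTML and flags those whose visible text is one of a few generic phrases. The phrase list is our own assumption and should be adapted to your site; links that contain only an image are reported with empty text and are not flagged.

```python
from html.parser import HTMLParser

# Illustrative list of phrases that say nothing about the linked page.
GENERIC_PHRASES = {"click here", "here", "read more", "learn more", "more"}


class AnchorCollector(HTMLParser):
    """Collect (href, visible text) for every <a href=...> element."""

    def __init__(self):
        super().__init__()
        self.anchors = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse runs of whitespace so "Click  here" matches "click here".
            text = " ".join("".join(self._text).split())
            self.anchors.append((self._href, text))
            self._href = None


def generic_anchors(html: str) -> list[tuple[str, str]]:
    collector = AnchorCollector()
    collector.feed(html)
    collector.close()
    return [(href, text) for href, text in collector.anchors
            if text.lower() in GENERIC_PHRASES]


# Made-up snippet.
snippet = (
    '<p><a href="/pricing">Click  here</a> or see '
    '<a href="/guides/redirects">our redirect guide</a>.</p>'
)
print(generic_anchors(snippet))
```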

Create a strategic internal linking plan that connects related content. When you publish a new blog post, link to existing posts on similar topics. Link from high-authority pages to important conversion pages to concentrate ranking signals where they matter most.

Optimizing Page Load Speed

Page speed is both a ranking factor and an important aspect of crawlability. Slow-loading pages consume more crawl budget because bots wait longer for each response. Webflow's hosting infrastructure is generally fast, but you can further optimize performance.

Compress images before uploading them to Webflow. Use modern image formats like WebP for faster delivery. Minimize the use of custom scripts and external resources that can slow down page loading. Run your site through Google PageSpeed Insights regularly and address the recommendations provided.

Creating an XML Sitemap

While Webflow automatically generates sitemaps, ensure you're actively submitting your sitemap to search engines. A properly formatted sitemap helps search engines find all your content quickly, reducing crawl errors related to discovery.

Include important pages in your sitemap but exclude duplicate content, outdated pages, and pages behind paywalls or login screens. Webflow's automatic sitemap generation handles most of this, but review it periodically to ensure it reflects your current site structure.

Monitoring and Maintaining Crawlability

Using Google Search Console Effectively

Check your Search Console account at least weekly during the first month after implementing changes, then monthly thereafter. Monitor the Page indexing report (formerly Coverage) for new issues and track how the number of indexed pages changes over time.

The Performance report shows you which queries bring traffic from search results. If important pages aren't appearing for their target queries, investigate whether those pages are being crawled and indexed. Use the URL Inspection tool to check their status.

Implementing Regular Crawl Audits

Beyond Google Search Console, conduct regular technical audits using third-party tools. SEMrush, Ahrefs, and Screaming Frog provide comprehensive crawl reports that identify issues Google Search Console might not surface.

Schedule quarterly audits to catch new errors before they impact your search visibility. Document your findings and track progress as you implement fixes. This systematic approach ensures continuous improvement to your site's technical SEO.

Tracking Indexation Metrics

Monitor your indexation progress through multiple channels. In Google Search Console, track the number of pages indexed over time. Watch for sudden drops, which indicate new technical issues or unintended changes affecting crawlability.
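Spotting a sudden drop is simple arithmetic once you record the indexed-page count on a schedule. The sketch below compares each reading with the previous one and flags any fall of 10% or more. Both the threshold and the sample figures are made up for illustration and should be tuned to the size of your site.

```python
def sudden_drops(counts: list[tuple[str, int]], threshold: float = 0.10):
    """Return (date, previous, current) wherever the count fell by `threshold` or more."""
    drops = []
    for (_, previous), (date, current) in zip(counts, counts[1:]):
        if previous > 0 and (previous - current) / previous >= threshold:
            drops.append((date, previous, current))
    return drops


# Invented weekly readings of indexed pages, copied by hand from Search Console.
history = [
    ("2026-04-01", 200),
    ("2026-04-08", 204),
    ("2026-04-15", 150),
    ("2026-04-22", 149),
]
print(sudden_drops(history))
```

With this data only the week of 2026-04-15 is flagged: the count fell from 204 to 150, a drop of about 26%, while the following one-page dip stays under the threshold.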

Use the URL Inspection tool to spot check random pages across your site. This helps you verify that your fixes are working and that new problems aren't developing in other areas.

Webflow Development Best Practices for Search Engine Optimization

When working with a Webflow development agency or managing development in-house, implement practices that prevent crawl errors before they occur. These preventive measures save significant time and effort compared to fixing errors after they develop.

Use Webflow's built-in SEO settings for each page, including meta titles and descriptions that accurately describe your content. Enable the sitemap feature and ensure all important pages are included. Configure your robots.txt file deliberately rather than relying on default settings.

Test changes thoroughly in a staging environment before publishing to your live site. Once a page is published, run a live test in Search Console's URL Inspection tool to see how Google renders it. This catches issues early, when they're still easy to fix.

Establish a regular maintenance schedule for your Webflow site that includes checking for new crawl errors and monitoring overall indexation health. Assign responsibility for SEO maintenance to ensure it doesn't get neglected amid other project demands.

Conclusion

Webflow crawl errors represent a common but fixable obstacle to search engine visibility. By understanding the types of errors that affect Webflow sites and implementing the solutions outlined in this guide, you can ensure that search engines can effectively crawl and index your content.

The path to improved search rankings starts with a healthy, crawlable website. Regular monitoring through Google Search Console, systematic diagnosis of errors, and prompt implementation of fixes create a foundation for long-term organic growth. Whether you manage your Webflow site independently or work with a Webflow development agency, prioritizing technical health pays dividends through improved visibility and increased organic traffic.

Take action today by accessing your Google Search Console account, reviewing your Page indexing report, and implementing the fixes that address your site's specific issues. Monitor your progress over the coming weeks and months, and celebrate the improved search rankings that result from your efforts. A crawlable, well-optimized Webflow site isn't just good for search engines; it's good for your users and your business success.

Frequently asked questions

Your XML sitemap — automatically generated by Webflow at yoursite.com/sitemap.xml and updated every time you publish — provides search engines with a structured list of all the pages on your site that you want indexed. It acts as a direct roadmap for Googlebot, ensuring that even pages with few or no internal links pointing to them are still discovered and crawled. However, the sitemap's effectiveness depends on its accuracy. A sitemap containing 404 error pages, redirect chains, or URLs blocked by robots.txt confuses crawlers and wastes crawl budget. You should regularly verify that every URL in your sitemap is accessible, returns a 200 status code, and is not blocked by any technical rules. Submitting your sitemap to Google Search Console allows you to monitor its health and see how many pages from it have been successfully indexed. Our Webflow Maintenance service monitors sitemap health continuously and flags any new issues introduced by content additions or structural changes.

There are several possible reasons a published Webflow page may not appear in Google search results. The page may be blocked by your robots.txt file, preventing Googlebot from accessing it. A noindex meta tag may have been applied to the page — either intentionally during development and never removed, or accidentally through a Webflow page setting. The page may simply not have been crawled yet, especially if it was recently published and has no internal links pointing to it. The page URL may have previously returned a crawl error that caused Google to reduce its crawl frequency for that URL. Or the page may exist in a redirect chain that prevents Google from reaching the canonical version. Use Google Search Console's URL Inspection tool to check the exact status of any page and identify which of these conditions applies. Our Webflow SEO team diagnoses and resolves indexing failures systematically, identifying the root cause for each affected page rather than applying generic fixes.

Your robots.txt file, accessible at yoursite.com/robots.txt, tells search engine bots which parts of your site to crawl and which to skip. Common misconfigurations include accidentally blocking entire content directories like /blog/ or /collections/, blocking CSS and JavaScript files that Google needs to render your pages correctly, or using overly broad disallow rules that exclude more than intended. In Webflow, you can edit your robots.txt directly within the SEO settings of your project. After making changes, open the robots.txt report in Google Search Console (under Settings) to confirm that Google fetched the updated file without errors, and use the URL Inspection tool to confirm that the content you want indexed is not blocked. A properly configured robots.txt should block only admin pages, duplicate content, internal search result pages, and other non-public areas, never your main content pages. Our Webflow Development team reviews and configures robots.txt as a standard step on every new build and migration to ensure it is precisely targeted from day one.

Crawl budget is the number of pages Googlebot will crawl on your site within a given time period. Google allocates this budget based on your site's overall health, authority, and server performance. When your site has crawl errors — broken pages, slow load times, redirect chains, or blocked resources — Google spends more of your crawl budget trying to process those problematic pages, leaving less budget available to discover and re-index your valuable content. For small sites with under 100 pages, crawl budget is rarely a limiting factor. For larger Webflow sites with hundreds of CMS-driven pages, blog posts, and landing pages, efficient crawl budget management becomes critical to ensuring all content gets indexed promptly. Our Webflow SEO service addresses crawl budget efficiency as a core technical priority, particularly for content-heavy sites where timely indexing directly affects organic traffic performance.

Redirect chains occur when URL A redirects to URL B, which then redirects to URL C before finally reaching the destination — creating unnecessary steps that waste crawl budget and slow down link equity transfer. Redirect loops happen when two or more URLs redirect to each other in a circle, making the destination permanently unreachable for both users and search engine bots. Every additional redirect in a chain consumes part of your site's crawl budget, meaning Google visits fewer of your pages per crawl cycle. For sites that have undergone redesigns, URL restructuring, or platform migrations, redirect chains are especially common. The correct fix is always to update each redirect to point directly to the final destination, eliminating all intermediate hops. Our Webflow Migration service manages the complete redirect mapping process during platform transitions, ensuring every old URL points cleanly to the correct new destination with no chains or loops.

Webflow sites are particularly prone to five categories of crawl errors. Server errors in the 5xx range — including 500 Internal Server Error, 502 Bad Gateway, and 503 Service Unavailable — prevent bots from accessing pages and cause them to be crawled less frequently over time. Redirect chains and loops develop during site restructuring when multiple redirects stack on top of each other instead of pointing directly to the final destination. Mixed content errors arise when custom code or third-party integrations load resources over HTTP on pages that use HTTPS. Robots.txt misconfigurations accidentally block important content directories from being crawled. XML sitemap issues — including broken URLs, redirect chains within the sitemap itself, or pages excluded from the sitemap entirely — prevent efficient page discovery. Our Webflow Development team implements preventive technical standards from the very first build to eliminate these issues before they ever reach a live site.

Google Search Console is the primary tool for identifying crawl errors. Navigate to the Page indexing report (Indexing > Pages), formerly called the Coverage report. It splits your pages into Indexed and Not indexed and lists the reason each group of pages was not indexed. For each reason listed, you can drill down to see the specific URLs affected and when the problem was first detected. The URL Inspection tool within Search Console lets you check individual pages to see exactly how Google rendered them and whether any access issues were encountered. Third-party tools like Screaming Frog, SEMrush, and Ahrefs provide additional crawl reports that often surface issues Search Console does not flag. Our Webflow Maintenance service conducts quarterly crawl audits using both Google Search Console and third-party tools to catch new errors before they compound into larger indexing problems.

Crawl errors occur when search engine bots — like Google's Googlebot — attempt to access pages on your Webflow site but encounter obstacles that prevent them from reading the content. These obstacles can include broken links, server errors, misconfigured robots.txt rules, redirect chains, or SSL issues. When search engines cannot crawl your pages, they cannot index your content — meaning your pages simply do not appear in search results, no matter how good the content is. Crawl errors also waste your site's crawl budget, causing Google to visit fewer pages per crawl cycle and slowing down the discovery of new content. Our Webflow SEO service begins every engagement with a full technical crawl audit to identify and resolve every barrier standing between your content and Google's index.
